A Totally New World

What is Clawdbot?

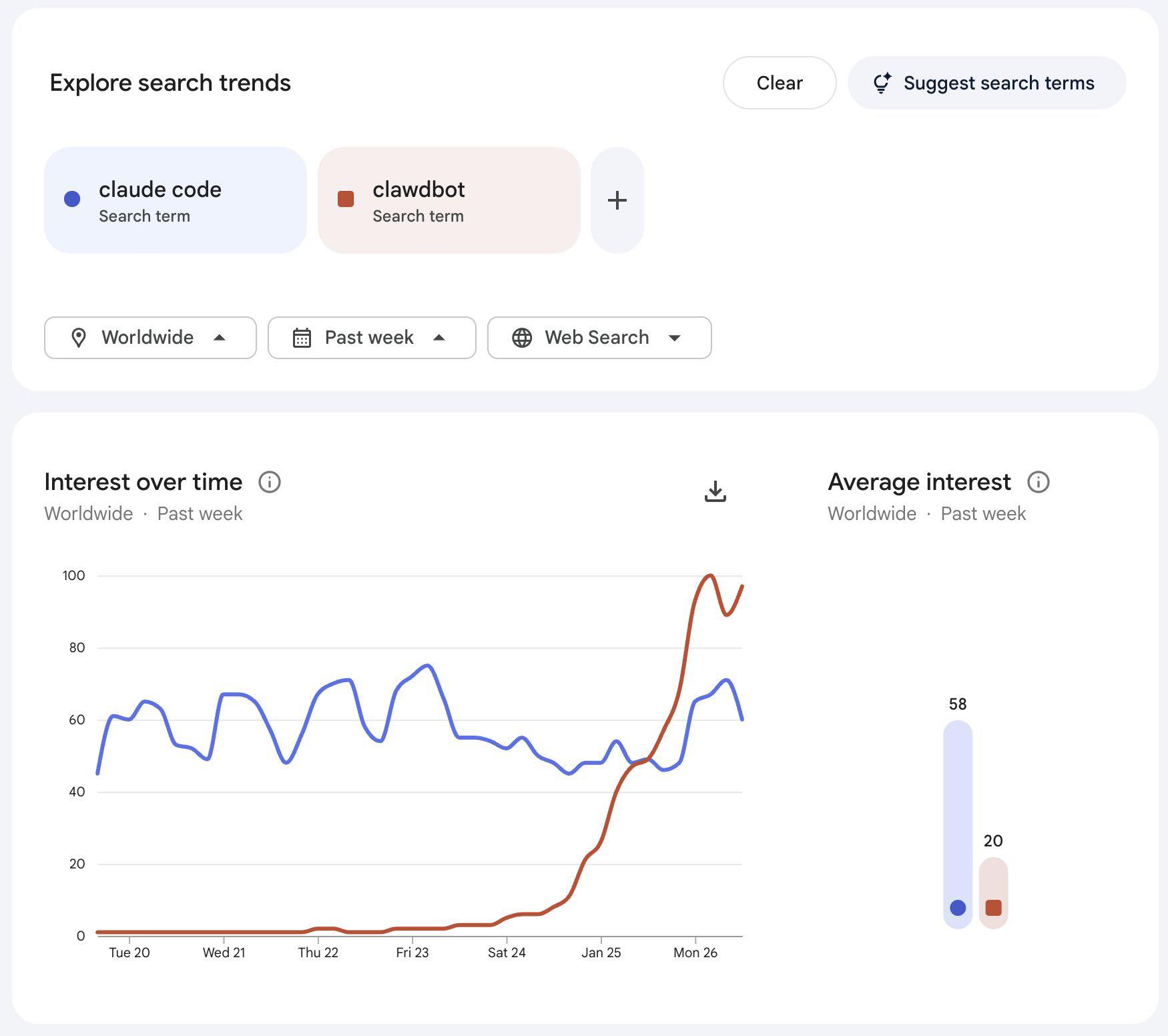

They say a picture is worth a thousand words:

This picture is also worth a lawsuit.

Clawdbot, err, Moltbot, is a personal AI assistant built by Austrian developer Peter Steinberger. And I think this is what Siri should have been.

The concept is simple: run an AI assistant locally on your own hardware that connects to all your messaging apps (WhatsApp, Telegram, Slack, Discord, iMessage, Signal, Teams). Message it from anywhere. It messages back. It can read your emails, manage your calendar, screen phone calls, book reservations. A digital butler that lives in your pocket.

The project went viral almost overnight. 73,000+ GitHub stars. Engineers and AI enthusiasts flooding X with tips, screenshots, and memes. Array VC's Shruti Gandhi reported getting attacked 7,922 times over the weekend just from running it.

Why This Matters

We've been promised AI assistants for a decade. Siri arrived in 2011. Google Assistant, Alexa, Cortana followed. All of them are locked in walled gardens, limited to what Apple/Google/Amazon decide you can do, and fundamentally designed to serve their platforms first and you second.

Clawdbot flips this. You own it. It runs on your hardware. You choose the AI model (Claude, GPT, whatever). You decide what it can access. No subscription fees to some corporation. No data going to servers you don't control.

This is what open-source AI infrastructure looks like when someone actually builds it right.

The Rebrand Drama

"Clawdbot" is an obvious play on "Claude," Anthropic's AI model. Anthropic apparently agreed, because they sent a trademark request. Steinberger complied, rebranding to "Moltbot" with a space lobster mascot named Molty.

During the chaos of the rebrand, scammers swooped in. They squatted his old X and GitHub handles and launched fake crypto tokens. A "ClawdBot" token hit $16M market cap before crashing 90%. Steinberger had to issue public statements disavowing any crypto connection.

Welcome to building in public in 2026.

The Security Problem

Researcher Jamieson O'Reilly found hundreds of Clawdbot control panels exposed on the public internet: live admin dashboards where anyone could view API keys, configuration data, and full conversation histories from private chats.

The culprit? An authentication bypass when the gateway runs behind an improperly configured reverse proxy. The platform ships without guardrails by default.
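
To make that concrete, here is a minimal Python sketch of one common way this class of bypass arises. It is an illustration under my own assumptions, not Moltbot's actual code: I assume the gateway auto-trusts loopback connections, and that a reverse proxy on the same machine makes every remote visitor look like `127.0.0.1`. The function names and the `FORWARDING_HEADERS` list are hypothetical.

```python
import ipaddress

# Headers that reverse proxies commonly add when forwarding a request.
# (Illustrative list; a real deployment may use others.)
FORWARDING_HEADERS = ("x-forwarded-for", "x-real-ip", "forwarded")


def naive_is_trusted(peer_ip: str) -> bool:
    """Trust any connection whose TCP peer is loopback.

    Behind a same-host reverse proxy, every remote visitor arrives
    with the proxy's address, so this returns True for all of them.
    """
    return ipaddress.ip_address(peer_ip).is_loopback


def is_trusted(peer_ip: str, headers: dict[str, str]) -> bool:
    """Skip authentication only for direct loopback connections.

    If any forwarding header is present, the request came through a
    proxy and must authenticate like everyone else.
    """
    names = {name.lower() for name in headers}
    if any(h in names for h in FORWARDING_HEADERS):
        return False
    return ipaddress.ip_address(peer_ip).is_loopback


# A remote visitor relayed by a local proxy:
proxied = {"X-Forwarded-For": "203.0.113.7"}
print(naive_is_trusted("127.0.0.1"))     # the naive check waves them through
print(is_trusted("127.0.0.1", proxied))  # the header-aware check does not
```

Even the second check fails if the proxy strips or never sets those headers, so the sturdier option is to require a token on every request and treat "local" as no special case at all.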

It gets worse. O'Reilly published a proof-of-concept supply chain attack on ClawdHub (the skills library). He uploaded a poisoned skill package, artificially inflated the download count to 4,000+, and watched developers from seven countries install it.

Multiple attack vectors are being actively exploited:

- Misconfigured cloud instances leaking API keys

- Moltbot instances connected to X leaking private data through crafted prompts

- No default authentication on control interfaces
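
For the last item, here is a rough Python sketch of what "authentication on by default" could look like. Everything in it is hypothetical (`GatewayConfig`, its fields, and the validation rule are my own invention, not Moltbot's configuration format): the defaults bind to loopback and generate a random token, and turning authentication off on a public address is refused outright.

```python
import secrets
from dataclasses import dataclass, field

# Addresses considered local-only for this sketch.
LOOPBACK_HOSTS = ("127.0.0.1", "::1", "localhost")


@dataclass
class GatewayConfig:
    """Hypothetical control-interface settings with safe defaults."""

    bind_host: str = "127.0.0.1"  # not reachable from the network by default
    require_auth: bool = True     # authentication is on unless turned off
    # A fresh random token per config, so there is no shared default secret.
    auth_token: str = field(default_factory=lambda: secrets.token_urlsafe(32))

    def validate(self) -> None:
        """Refuse the one combination that exposes an open admin panel."""
        if not self.require_auth and self.bind_host not in LOOPBACK_HOSTS:
            raise ValueError(
                "refusing to expose an unauthenticated control interface"
            )


# Defaults pass validation without the user doing anything.
GatewayConfig().validate()

# Opting out of auth on a public address fails loudly.
try:
    GatewayConfig(bind_host="0.0.0.0", require_auth=False).validate()
except ValueError as err:
    print(f"rejected: {err}")
```

The point of the design is that the insecure setup takes deliberate effort, while the lazy path stays safe.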

This isn't theoretical. This is happening right now.

Why I'm Still Excited

This is exactly the messy, dangerous, exciting frontier that creates real innovation.

Every major platform started with security nightmares. Early web apps were XSS playgrounds. Mobile apps shipped with hardcoded API keys. OAuth implementations were broken for years. We figured it out.

Clawdbot/Moltbot represents something important: the first genuinely compelling open-source AI agent infrastructure. Not a chatbot wrapper. Not an API playground. An actual agent that can do things in the real world through channels people already use.

For tinkerers, this is the moment. The equivalent of the early iPhone jailbreak scene or the Arduino explosion. Yes, you can hurt yourself. Yes, there will be spectacular failures. But this is where the next generation of AI-native tools gets built.

What Comes Next

The project needs:

- Default-secure configurations (opt-out security, not opt-in)

- Signed skill packages with proper verification

- Better documentation for non-expert deployments

- Community security audits
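
As a small illustration of the package verification item, here is a Python sketch of digest pinning: refusing to install a skill unless its SHA-256 hash matches a value obtained from a source you trust. This is a simpler stand-in for real signatures (which would use public-key cryptography), and the function names are hypothetical rather than anything ClawdHub provides.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the package bytes as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def verify_skill(package_bytes: bytes, pinned_digest: str) -> bool:
    """Accept the package only if its digest matches the pinned value.

    compare_digest does a constant-time comparison of the two strings.
    """
    return hmac.compare_digest(sha256_hex(package_bytes), pinned_digest.lower())


# The digest would normally come from a trusted channel, such as a
# reviewed lockfile, not from the same server that hosts the package.
original = b"print('hello from a skill')"
pinned = sha256_hex(original)

print(verify_skill(original, pinned))                       # untouched package
print(verify_skill(original + b"\nimport os  # ...", pinned))  # tampered package
```

Pinning only proves the bytes are the ones you reviewed. It says nothing about who published them, which is the gap signatures are meant to close, and neither would have been fooled by an inflated download count.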

But the foundation is there. The architecture is sound. The vision is correct.

Projects like this, where someone built an advanced cognitive inoculation prompt, really show us that we're in a tremendously awesome time, yet still in the very early stages.

Siri was a demo that became a product that became a disappointment. Clawdbot is a tinkerer's toy that might become the actual future of personal AI.

I'm watching closely.

Sources: